By Gordon Rugg

Complex things often have simple causes. Here’s a classic example. It’s a fractal.

(From Wikimedia)

Fractal images are so complex that there’s an entire area of mathematics specialising in them. However, the complex fractal image above comes from a single, simple equation.
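As a rough sketch of how little is needed: the image linked in the notes is a Julia set, and Julia sets come from repeatedly applying the rule z → z² + c to each point on the plane and counting how long the point takes to escape. The particular value of c, the grid size and the character ramp below are assumptions chosen for illustration, not the settings behind the Wikimedia image.

```python
def escape_time(z: complex, c: complex, max_iter: int = 50) -> int:
    """Count how many times z -> z*z + c can be applied before |z| exceeds 2."""
    for n in range(max_iter):
        if abs(z) > 2.0:
            return n
        z = z * z + c
    return max_iter


def render(c: complex, width: int = 60, height: int = 24, max_iter: int = 50) -> str:
    """Draw a coarse text picture of the Julia set for the constant c."""
    chars = " .:-=+*#%@"  # slow-escaping points get denser characters
    rows = []
    for j in range(height):
        y = 1.2 - 2.4 * j / (height - 1)
        row = ""
        for i in range(width):
            x = -1.6 + 3.2 * i / (width - 1)
            n = escape_time(complex(x, y), c, max_iter)
            row += chars[n * len(chars) // (max_iter + 1)]
        rows.append(row)
    return "\n".join(rows)


if __name__ == "__main__":
    # c is an arbitrary illustrative choice (an assumption, not from the article).
    print(render(complex(-0.8, 0.156)))
```

All of the visual complexity comes from the one line `z = z * z + c`; everything else is bookkeeping for drawing the result.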

Humour and sudden shocks are also complex, since they both depend on substantial knowledge about the world and about human behaviour, but, like fractals, the key to them comes from a very simple underlying mechanism. Here’s what it looks like. It’s called the Necker cube.

So what’s the Necker cube, and how is it involved with such emotive areas as humour and horror?

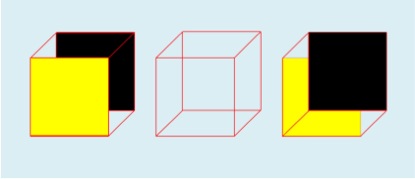

The Necker cube is a visual illusion named after Louis Necker, who published it in an illustration in 1832. The key feature is that it can be perceived in either of two ways, but not as anything in between; the human visual system treats it as an either/or choice, and will switch suddenly from one to the other, with no intermediate states. Here are the two ways of interpreting that central cube.

There’s been a lot of very interesting work on visual illusions, and on what they tell us about the workings of the human visual system and the human brain. The Necker cube would have earned its place in history just for its part in that work. However, researchers in other, very different, fields spotted some very practical ways in which the Necker cube gave important insights into otherwise puzzling phenomena.

Humour is a classic example of something that looks very complex. Humour draws on detailed knowledge about the world and about human behaviour, and it often involves complex mental models of what one person thinks that another person is thinking (known in some fields as Theory of Mind).

However, science has a habit of turning up very simple underlying explanations for phenomena that look very complex on the surface. Humour is one of those cases.

A key insight came when humour researchers realised that a lot of humour depends on the concept of script oppositeness. This involves having a situation that can be interpreted in one way, but that can also be interpreted in a very different, non-threatening, way. The punch line in a joke is where the listener suddenly realises what the alternative interpretation is; the greater the difference between the two interpretations, the funnier the joke is. This is why some people like practical jokes, which often begin with an apparently scary situation, but then resolve into a non-threatening one.

The same principle also works in the other direction. If you’ve been interpreting a set of events in one way that is unthreatening, and then you realise that there’s a different interpretation that is very threatening, you get a shock. Again, the bigger the difference between the two interpretations, the bigger the shock.

If you like evolutionary psychology, then there’s a neat parallel with this elsewhere in the animal world. Vervet monkeys have different alarm calls for different types of potential threat; they also have a call that means, in effect, “Everything’s okay”. When they hear the “Everything’s okay” call after an alarm, they relax visibly. It’s plausible that human humour is just a more elaborate version of this process, and that laughter is just a more elaborate version of the sigh of relief.

You can represent this principle applied to the classic “1-2-3” joke visually with a set of Necker cube diagrams. That may sound like massive overkill for a simple joke, but it actually gives some deep, powerful insights into much further-reaching issues. Here’s how it works. I’ll do the joke first, in what will probably feel like mind-numbing detail, and then look at the bigger picture.

First you have the introduction that sets the scene. It only gives you part of the information, and it points you in one direction – in this case, the direction of assuming that the cube will have a yellow front.

Next, you get another piece of information that’s consistent with the first, and that moves you further towards expecting a cube with a yellow front.

The next step in this pattern would be another square of yellow, in the bottom left corner, covering the red diagonal, and then another batch of yellows further up, taking you towards a final yellow front, like this.

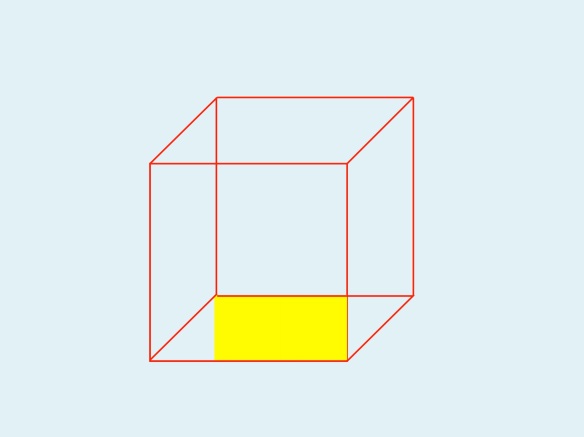

Instead, you get the punch line. You’re given another piece of information that suddenly tells you that your first interpretation was plausible, but wrong.

That one small area of black tells you that your first interpretation was wrong, and points you towards a very different interpretation, namely this one.

So what?

You see exactly the same sequence in a lot of real-world cases, some funny, some scary, and many that are both funny and scary at the same time.

A classic ancient example that I used in my book Blind Spot is the battle of Cannae, where Hannibal was fighting a larger Roman army. The first two stages of the battle went exactly as the Romans were expecting. First, Hannibal’s cavalry wiped out the Roman cavalry, which was what usually happened. Second, and much more important from the viewpoint of Roman expectations, the Roman army pushed back the centre of Hannibal’s army until it was bowed deeply back in the centre, near to breaking point. Once Hannibal’s line broke, the Romans would be able to wipe out the pieces one by one.

Then came the punch line. Hannibal’s cavalry suddenly reappeared behind the Romans. The Romans suddenly had the Necker shift experience of realising that they had just got themselves surrounded on three sides, by pushing Hannibal’s line far back in the centre, and that Hannibal’s cavalry had now surrounded them on the last remaining side. By pushing forward so far, they had actually fought their way into a trap. A few Romans managed to fight their way out before the trap fully closed; most of the rest were slaughtered. The ancient sources disagree on the numbers, but about 60,000 is a common estimate for the Roman dead, which is more than the US death toll for the entire Vietnam War, killed in a single day.

A lot of disasters follow that pattern. First there’s the evidence pointing towards everything being normal. Then there’s the first sign that something’s not right, followed by the Necker shift and that sinking feeling in the gut, from the sudden realisation that events have just taken a very unpleasant turn for the worse.

One of my favourite examples is a classic in the disaster literature, for numerous reasons. It’s called the Gimli Glider. It’s an airliner that ran out of fuel in mid-air, because the flight crew thought the fuel figures were in kilos, when actually they were in pounds. The pilot then treated the airliner as a glider, and brought it to a safe but eventful landing at the disused Gimli airfield. The pilot and first officer were first punished for the mistake with the fuel, and then given medals for saving everyone through some outstanding flying.

That case illustrates a lot of points where Necker shifts are key features.

Does your mistake look sensible?

The first point was the mistake with the fuel. It wasn’t a simple case of oversight. The flight crew checked the fuel numbers and then double-checked them, following procedures correctly. However, for a long and tangled chain of reasons, they had been given faulty information, so they ended up with results that looked plausible and that had come out of the correct procedures, but that were still wrong.

That’s a common feature of bad design. There are numerous ways in which a design can be bad. One of the most serious is ambiguity. If an indicator can be plausibly misinterpreted, then sooner or later it will be, and because it’s plausible, the misinterpretation probably won’t be caught immediately.

In the Gimli case, the instrumentation showed a figure of 22,300 for the fuel weight, which would look perfectly sensible for their intended flight if that figure were in kilos, which was what they were expecting. Instead, they had loaded 22,300 pounds, which was nowhere near enough. Ironically, if the instrumentation had been completely wrong, with a figure such as 2,230, then that would probably have been spotted immediately; however, an ambiguous and plausible mistake wasn’t spotted. (For readers familiar with the full details of this story, yes, the full story is indeed more complicated, but this is the take-home message from it as regards good design.)
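To put a number on “nowhere near enough”, here is a small sketch of the arithmetic, using the simplified 22,300 figure from the account above rather than the full details of the incident. The function names are hypothetical; the only outside fact is the standard definition of the pound as exactly 0.45359237 kilograms.

```python
KG_PER_LB = 0.45359237  # exact, by the international definition of the pound


def pounds_to_kilos(pounds: float) -> float:
    """Convert a weight in pounds to kilograms."""
    return pounds * KG_PER_LB


def shortfall_kg(reading: float) -> float:
    """Fuel missing when a reading in pounds is taken to be in kilograms."""
    return reading - pounds_to_kilos(reading)


if __name__ == "__main__":
    reading = 22_300  # simplified figure from the article
    print(f"Assumed on board: {reading:,.0f} kg")
    print(f"Actually on board: {pounds_to_kilos(reading):,.0f} kg")
    print(f"Shortfall: {shortfall_kg(reading):,.0f} kg")
    print(f"Fraction of intended fuel: {pounds_to_kilos(reading) / reading:.0%}")
```

On these figures the aircraft would have had a little under half the fuel the crew believed they had, yet the number on the display was exactly the number they expected to see. That is what makes an ambiguous unit so much more dangerous than an obviously wrong value.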

Knowing whether to laugh or cry

There are numerous points in the Gimli story where the situation is simultaneously funny and potentially tragic. This is a common feature in real disasters. For instance, when the Gimli glider was coming down to land, there was a social event on the disused runway. Some children were riding their bicycles on the runway, right in the path of the incoming airliner. When they realised that the plane was coming toward them, they started frantically pedalling as hard as they could, in the worst possible direction. With hindsight, it’s funny; at the time, though, the outcome could have been very different.

One common coping strategy among people who work with death, disaster and tragedy is to develop a sardonic sense of humour. In the film Falling from the Sky: Flight 174, which was based on the Gimli Glider, the pilot and first officer decide not to use one possible route because it would risk a crash landing in the city of Winnipeg; one of them comments to the other that this would look bad on their resumes. It’s a great line at several levels, and humour like this helps keep the cool, level head that’s needed in such situations, but it can easily be mistaken by outsiders for callousness. Various cultures value a type of understated humour very similar to this, and there’s an interesting literature on the role of societal cultures in views of risk and safety.

What are we looking at here?

A central theme in the discussion above is having more than one possible interpretation for the situation. That raises the question of where we get those interpretations from, which in turn takes us to schema theory and script theory, which were the topic of my previous article. A schema is a mental template for organising and categorising information; a script in this context is a mental template for a standard series of actions.

The first set of information that you see when you meet something new is disproportionately important, because at the start, it’s the only information you have. That sounds fairly obvious, which it is. However, the problem is that your brain will be looking for the appropriate schema to use, and once it’s chosen a schema, it’s reluctant to change to a different schema unless there’s a very visible need to change.

This is why first impressions are disproportionately important, and why the way that a problem is first presented will have a long-lasting effect on how it will be tackled. It’s how we decide things like which type of movie we’re seeing, usually within the first few seconds of viewing.

This is also why people often persist with an incorrect idea of what’s happening long after it’s clear to an outsider that there’s a better option available. The human brain is very good at finding evidence consistent with that first choice of schema, and very good at ignoring inconvenient evidence that doesn’t fit. This tendency is known as confirmation bias, and it’s so widespread that I’m now surprised when I don’t find it when I start looking at the previous literature on a problem.

The uncanny valley revisited

The first impression is disproportionately important. What happens, though, when that first impression is equally balanced between two very different interpretations, rather than pointing you towards a single, wrong, interpretation?

That situation would look something like this on our Necker cube visualisation.

It’s not quite the same as our initial wireframe Necker cube, since we have some information about which colours are involved. However, there are equal amounts of information pointing in opposite directions.

In an unthreatening situation like viewing a diagram on a page, this is a mildly interesting or mildly irritating uncertainty, depending on your mood or viewpoint. In real life situations, though, this type of uncertainty can be profoundly unsettling, and potentially dangerous (as in the classic uncomfortable question of whether the person walking towards you in a lonely street is a harmless pedestrian or a criminal who sees you as their next victim).

One milder but still unsettling example of this is the uncanny valley, a concept introduced by the Japanese robotics researcher Masahiro Mori. He proposed that up to a certain point, the more human-like a robot was, the more it was liked by people. However, there was a point where suddenly people started to find a robot very disconcerting because it was neither clearly a robot nor clearly a human. That feeling reduced once the robot passed another threshold, and could pass visually for a human. Mori termed this discomfort zone the uncanny valley.

This concept has been adopted by researchers in a wide range of other fields, since it offers a plausible explanation for the role of humanoid monsters in movies, folklore and popular culture – an uncanny valley marking the edges of the human landscape, and the start of the non-human landscape.

It also provides a plausible explanation for why some completely human phenomena are profoundly unsettling. An example of this is the discomfort that many people feel when they see images of child beauty pageant contestants, where the schema for “child” (small, “natural” and asexual) comes into sharp contrast with the schema for “beauty queen” (tall, with sophisticated makeup, dress and hair style, and highly sexualised). Most viewers don’t have a well-established mental pigeonhole specifically for “child beauty pageant contestant”, so they end up in a rapid and uncomfortable Necker shift alternation between the “child” schema and the “beauty queen” schema.

Conclusion

The Necker shift is a concept that we’ve found extremely useful in our work. It gives powerful insights into assorted types of error and misunderstanding, and into a wide range of other phenomena, ranging from comedy to horror.

In this article, I’ve looked at how this concept relates to schema theory and script theory. There are numerous other interesting questions, such as which features are the classic indicators of different types of schema and script, but those will need to wait for another article…

Notes and links

The fractal image in this article is a close-up of the one here:

http://upload.wikimedia.org/wikipedia/commons/1/17/Julia_set_%28highres_01%29.jpg

There’s an interesting recent post by Mano Singham on what visual illusions tell us about how the brain works, over on Freethought Blogs:

http://freethoughtblogs.com/singham/2013/08/30/seeing-our-own-brains-at-work/

There’s a collection of edited highlights from a documentary about the Gimli Glider here on YouTube:

https://www.youtube.com/watch?v=4yvUi7OAOL4

My previous article about schema theory and script theory is here:

Schema theory, scripts, and mental templates: An introduction

Blind Spot is available on Amazon:

All images above are copyleft Hyde & Rugg, unless otherwise stated. You’re welcome to use the copyleft images for any non-commercial purpose, including lectures, provided that you state that they’re copyleft Hyde & Rugg.
